Vexor: Pharma Catalyst Intelligence

April 17, 2026 - One Tool A Week

An autonomous feed that scores binary FDA catalyst events against what the options market is pricing, surfacing the gap before the science resolves.

This one is different.

Every other OTAW tool fits four constraints: a week, a stack, an audience, a remix. Vexor doesn’t. It took longer than a week. The audience is not an internal team at Symplicity. The remix is not a cheaper version of a SaaS tool a company is already paying for. It is a new product in a domain that does not currently have a good equivalent.

I kept building it anyway. The thesis behind OTAW is that a single designer with Claude can ship what used to require a team. Vexor is where that thesis meets something more ambitious than a time-off tool. If the cost-arbitrage argument holds for internal tools, the more interesting question is whether the same build style can produce a real analytical product. One where the output is not a form submission but a scored verdict that has to be right.

The Problem

Public biotech stocks trade on binary events. A Phase 3 readout lands, the FDA issues a decision, an advisory committee votes, and the stock moves 40%, 70%, sometimes more, in a single session. The event is the catalyst. Every dollar is priced against the outcome.

The people who score these events well are a small number of specialists who read trial protocols, FDA guidance documents, SEC filings, patent claims, and enforcement actions, then compare what the science supports against what the options chain is pricing. They find the gaps. That work is slow, it is expensive, and it does not scale.

Meanwhile there are retail investors and small funds holding positions in these companies with no structured way to evaluate whether the market’s implied probability matches the underlying science. They rely on Twitter threads, Seeking Alpha posts, and management press releases. Which is to say, they rely on the sources least likely to be honest about a drug that does not work.

The Solution

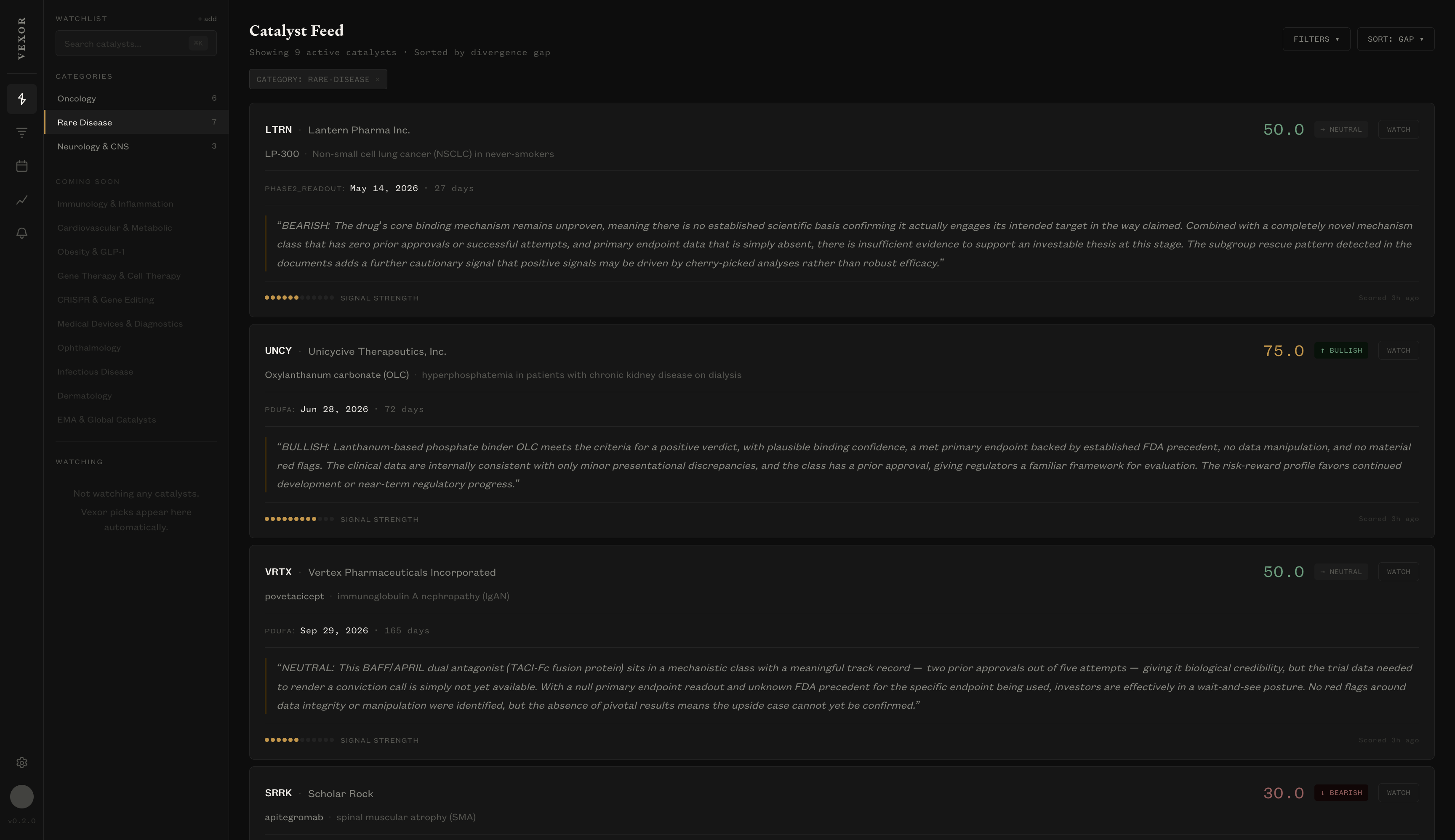

Vexor is an autonomous feed of scored pharma catalyst events.

You do not search for catalysts. The platform finds them. Three scrapers monitor SEC EDGAR, ClinicalTrials.gov, and the FDA advisory committee calendar continuously. When a new PDUFA date or Phase 3 readout shows up, Vexor pulls every relevant document. The trial protocol, prior Phase 2 results, FDA guidance for the indication, 10-K filings, enforcement actions, Form 4 insider transactions, patent claims. The full corpus runs through a five-layer analytical pipeline powered by Claude.
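A minimal sketch of the "new catalyst shows up" step, assuming each scraper emits simple event records and that an event's identity is the tuple (ticker, event type, date). The `CatalystEvent` class, `detect_new` function, and the event-type labels are hypothetical illustrations, not Vexor's actual code. The point is deduplication: the same PDUFA date seen by both the EDGAR and advisory-committee scrapers should trigger one document pull, not two.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CatalystEvent:
    # Hypothetical record shape; field names are illustrative.
    ticker: str
    event_type: str   # e.g. "PDUFA" or "PHASE3_READOUT" (labels assumed)
    event_date: date
    source: str       # which scraper saw it: "edgar", "ctgov", "adcomm"

    def key(self) -> tuple[str, str, date]:
        # Identity ignores the source, so two scrapers reporting the
        # same event collapse to a single key.
        return (self.ticker, self.event_type, self.event_date)


def detect_new(events: list[CatalystEvent], seen_keys: set) -> list[CatalystEvent]:
    """Return events whose key has not been seen before; updates seen_keys in place."""
    new = []
    for ev in events:
        k = ev.key()
        if k not in seen_keys:
            seen_keys.add(k)
            new.append(ev)
    return new
```

One design question this glosses over: because the date is part of the key, a PDUFA extension would register as a new event. Whether that should open a fresh catalyst or re-score the existing one is a choice the sketch does not make.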

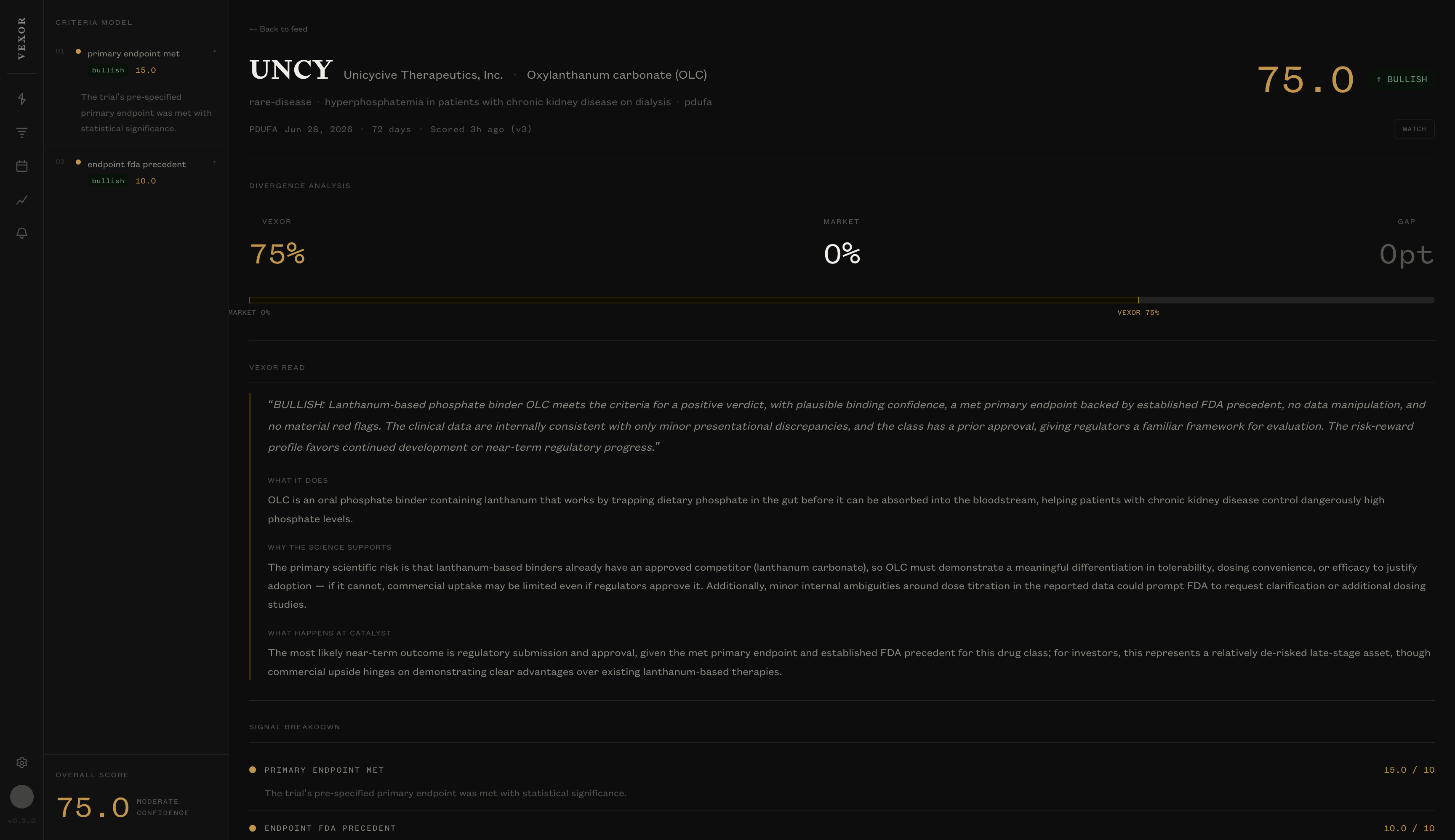

The output is a verdict card. One catalyst, one page. It tells you three things: what the drug is supposed to do, why the science does or does not support it, and what the market is pricing. The difference between the science score and the market’s implied probability is the gap. The feed is ranked by gap size. The largest divergences between what the science supports and what the market is pricing surface first.
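The gap and the feed ordering can be sketched in a few lines, assuming both the science score and the market's implied probability are expressed as probabilities in [0, 1], and reading "largest divergences" as the absolute size of the gap in either direction. How Vexor derives the implied probability from the options chain is not described here, so the sketch takes it as a given input. `Verdict` and `rank_feed` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    ticker: str
    science_score: float        # assumed: probability of success in [0, 1]
    implied_probability: float  # assumed: market-implied probability in [0, 1], supplied upstream

    @property
    def gap(self) -> float:
        # Positive: the science supports success more than the market prices it.
        # Negative: the market is more optimistic than the science.
        return self.science_score - self.implied_probability


def rank_feed(verdicts: list) -> list:
    # Largest divergence first, regardless of its sign.
    return sorted(verdicts, key=lambda v: abs(v.gap), reverse=True)
```

With hypothetical tickers, a catalyst scored 0.2 against a market price of 0.6 (gap -0.4) would lead a feed ahead of one scored 0.7 against 0.4 (gap +0.3). Keeping the sign on the card matters even though the ranking ignores it, since it says which side of the market the science is on.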

Every verdict is versioned. When a new 8-K files or a new trial document publishes, the catalyst re-scores and the history log shows what changed and why. Nothing is lost. You can see the reasoning trail from the first score to the outcome.
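A minimal sketch of the append-only history behind "nothing is lost," assuming each re-score records the triggering document and a short rationale. In the real system this history lives in a TimescaleDB hypertable; the in-memory list below is only a stand-in, and `ScoreVersion`, `CatalystHistory`, `rescore`, and `changelog` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class ScoreVersion:
    version: int
    scored_at: datetime
    score: float
    trigger: str     # what caused the re-score, e.g. "8-K filed"
    rationale: str   # why the score moved


@dataclass
class CatalystHistory:
    ticker: str
    versions: list = field(default_factory=list)

    def rescore(self, score: float, trigger: str, rationale: str,
                at: Optional[datetime] = None) -> ScoreVersion:
        # Append-only: earlier versions are frozen and never edited or deleted.
        v = ScoreVersion(
            version=len(self.versions) + 1,
            scored_at=at or datetime.now(),
            score=score,
            trigger=trigger,
            rationale=rationale,
        )
        self.versions.append(v)
        return v

    def changelog(self) -> list:
        # One line per version: the score, its change from the prior version, and why.
        lines = []
        prev = None
        for v in self.versions:
            delta = "" if prev is None else f" ({v.score - prev.score:+.2f})"
            lines.append(f"v{v.version}: {v.score:.2f}{delta} after {v.trigger}: {v.rationale}")
            prev = v
        return lines
```

Because versions are never overwritten, the reasoning trail from first score to outcome is just the changelog read top to bottom.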

What Makes It Work

The scoring pipeline separates mechanism, trial design, management credibility, financial runway, and governance into distinct analytical layers. Each one has its own document sources and structured output fields. The final composite is computed from named boolean and integer fields, not from sentiment analysis of prose. A drug with an unproven binding mechanism and a primary endpoint that missed statistical significance produces the same bearish signal every time, regardless of how the press release is worded.
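To make "computed from named fields, not from prose" concrete, here is a minimal sketch that assumes each of the five layers emits a few typed fields and the composite is a fixed function of them. The field names, weights, and thresholds are invented for illustration and are not Vexor's scoring model. What the sketch does show is the property the paragraph claims: there is no text input, so identical field values always produce an identical score.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerOutputs:
    # Hypothetical fields, one per layer for illustration.
    mechanism_binding_proven: bool   # mechanism layer
    primary_endpoint_met: bool       # trial-design layer
    prior_guidance_misses: int       # management-credibility layer
    cash_runway_months: int          # financial-runway layer
    open_enforcement_actions: int    # governance layer


def composite_score(x: LayerOutputs) -> float:
    """Deterministic composite in [0, 1]. Weights are illustrative assumptions."""
    score = 0.5
    score += 0.15 if x.mechanism_binding_proven else -0.15
    score += 0.20 if x.primary_endpoint_met else -0.20
    score -= 0.03 * min(x.prior_guidance_misses, 5)    # capped so one layer cannot dominate
    score -= 0.05 if x.cash_runway_months < 12 else 0.0
    score -= 0.04 * min(x.open_enforcement_actions, 3)
    return max(0.0, min(1.0, score))                   # clamp to a probability-like range
```

An unproven mechanism plus a missed primary endpoint lands near 0.15 here however upbeat the press release is, because the press release never reaches this function.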

The model was validated against three resolved catalysts before I trusted any of its output: GALT/belapectin, SAVA/simufilam, MDGL/resmetirom. It produced the correct directional verdict on all three, with reasoning that tracked the actual failure and success modes in each case. That validation is the reason the product exists. Without it I would be shipping a bullshit generator dressed up as analysis.

Why It Matters

The honest version of this section: I do not know yet whether Vexor will work as a product. It is a real system with a real scoring model and real validated cases, but the test of the thesis is eighteen months of resolved catalysts with a directional accuracy rate high enough to justify the price. That is a moat you build slowly. Every catalyst that resolves adds one row to the track record and makes the argument harder to challenge. There is no shortcut.
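The track-record metric itself is simple to state. A minimal sketch, assuming a verdict counts as bullish when its final pre-event score is at or above a threshold and that each resolved catalyst is recorded as a plain success or failure. The 0.5 threshold, `ResolvedCatalyst`, and `directional_accuracy` are hypothetical; unresolved catalysts are excluded rather than counted as misses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ResolvedCatalyst:
    ticker: str
    science_score: float          # score at the last version before the event
    succeeded: Optional[bool]     # None while the catalyst is unresolved


def directional_accuracy(catalysts: list, threshold: float = 0.5) -> Optional[float]:
    """Share of resolved catalysts whose verdict direction matched the outcome."""
    resolved = [c for c in catalysts if c.succeeded is not None]
    if not resolved:
        return None  # no track record yet
    hits = sum((c.science_score >= threshold) == c.succeeded for c in resolved)
    return hits / len(resolved)
```

Each resolution adds one row to `resolved`, which is the slow accumulation the paragraph describes: the ratio only becomes persuasive once the denominator is large.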

What I can say now is that the build itself proved something I had been wondering about since Clara. The OTAW style holds up at this scope. Spec the thing carefully, generate against the spec, iterate with Claude Code. Vexor has five analytical layers, three scrapers, a TimescaleDB hypertable for score history, a Qdrant index for document retrieval, and idempotent pipeline steps so that every stage can be safely retried. None of that is a one-weekend build. All of it is the same workflow as the smaller tools, scaled up.
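"Idempotent pipeline steps" can be sketched as follows, assuming each step's inputs are JSON-serializable and that a completed result is stored under a hash of the step name plus its inputs. This content-hash approach is one common way to get safe retries, not necessarily how Vexor does it; `StepStore`, `step_key`, and `run_idempotent` are hypothetical, and the in-memory dict stands in for what would need to be durable, shared storage.

```python
import hashlib
import json


class StepStore:
    """In-memory stand-in for a durable store of completed step results."""

    def __init__(self):
        self._results = {}

    def has(self, key: str) -> bool:
        return key in self._results

    def get(self, key: str):
        return self._results[key]

    def put(self, key: str, value) -> None:
        self._results[key] = value


def step_key(step_name: str, inputs: dict) -> str:
    # Same step + same inputs -> same key. sort_keys makes dict ordering irrelevant.
    payload = json.dumps({"step": step_name, "inputs": inputs}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def run_idempotent(store: StepStore, step_name: str, inputs: dict, fn):
    """Run fn(inputs) at most once per (step_name, inputs); retries return the stored result."""
    key = step_key(step_name, inputs)
    if store.has(key):
        return store.get(key)  # already completed, so the retry is a no-op
    result = fn(inputs)        # if this raises, nothing is recorded and a retry re-runs it
    store.put(key, result)     # record only after the step finishes
    return result
```

Using `has` rather than checking for `None` means a step that legitimately returns `None` is still recorded as done.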

What I Learned

The constraints of OTAW are not the point. The point is the rhythm underneath them. A designer with clear thinking, a good spec, and Claude can produce a real analytical product, not just a cheaper version of something a vendor already sells. The time-off tool was the easy version of the argument. The catalyst scorer is the harder one.

If Vexor’s track record holds over the next year, it will be the most important thing on this site. If it does not, it will still be the clearest demonstration of what this build style can reach for. Both outcomes are useful. That is why I kept going.

Vexor is in private beta. Reach out if you'd like to try it.